At face value, customer satisfaction polling is a great thing.

As a customer, I try to respond to as many satisfaction surveys as possible for a variety of reasons. First, I think it’s respectful to provide feedback to companies and people you do business with. Second, providing feedback may improve products and services I care about or depend on. Third, as a marketer myself, I like to take satisfaction surveys so I can peel the onion on top issues and survey the techniques of other marketers.

But this month’s volume of satisfaction surveys has been overwhelming.

I just wrapped up several business and personal trips over the past few weeks, including SXSW, Disney World, and several cities in Canada and the U.S. My patronage of numerous conferences, restaurants, hotels, airlines, car-rental companies and online travel agencies has prompted a flood of customer satisfaction surveys.

I also received additional satisfaction surveys this month for other reasons. The domestic variety included car maintenance, updated Internet service plans, updated mobile phone plans, and some home contractor work. On the work front, I’ve received surveys for various professional and software vendors. I must’ve received at least 25 invites to take customer service surveys in March alone.

Satisfaction surveys have long enjoyed priority status in my inbox, but survey automation tools (e.g., email, website intercepts and pop-ups, and robot telephone pollsters) are increasing the volume and frequency of survey solicitations. As a result, satisfaction surveys must work harder to pass through my mental and email spam filters.

Those same automation tools are making it easier to administer longer, more unwieldy surveys. If I get another robot pollster survey that requires more than 60 seconds of my life, I’ll be tempted to boycott the company behind it. It’s ironic that most of these surveys include the Net Promoter Score (NPS) question, i.e., “on a scale of 0 to 10, how likely are you to recommend this service to a friend?” NPS is heralded by customer loyalty experts as “the one metric that matters most,” but it tends to get padded by dozens of other questions. Do they matter and warrant my time?

To make matters worse, very few online satisfaction surveys are optimized for mobile-device response.

In my recent essay for Wharton’s Future of Advertising program, I underscored that “digital breadcrumbs” will become the new research. Traditional market research — particularly representative sampling and self-reported survey techniques — will never go away. However, those clunky, interceptive methods will eventually become subservient to the gathering and interpretation of large, passively gathered data sets that don’t represent populations, but are the actual measured behaviors of populations and individuals.

It is time for marketing professionals to embrace this concept for taking the pulse of customer satisfaction. In our increasingly byte-sized world, interceptive surveys won’t remain sustainable as their volume and length keep increasing.

When self-reported surveys are mandatory, marketers and loyalty professionals must do a better job of keeping their surveys short, mobile-friendly and rewarding.

This essay also ran in MediaPost.

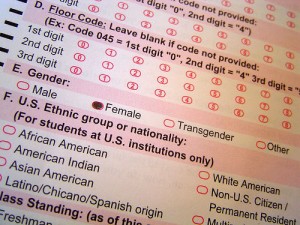

(Photo: roboppy)

When you ask customers to fill out a survey, make sure you make it worth their time! Keep it short and concise, and ask only the questions you really need answers to. Most customers won’t give you more than a minute or two, so really home in on what you need to know.

Thanks for sharing this. I use SoGoSurvey’s online survey software for all my online survey needs. They have a wide range of sample surveys to choose from, which makes the task of creating surveys easier. I highly recommend SoGoSurvey!